Building Superior Organic Search Results

Strategy-driven SEO

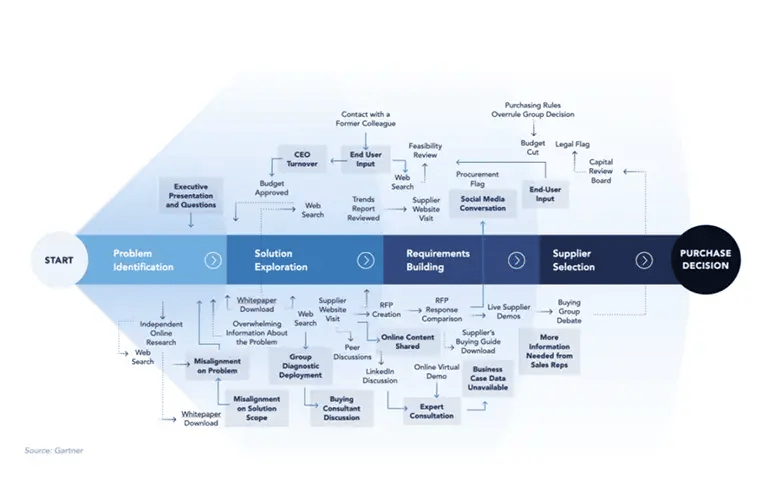

In recent years, marketing departments have almost universally depicted the customer journey as a funnel, with a customer following a logical line from awareness and familiarity to consideration and purchase, and ideally to loyalty. The near-universal presence of businesses online, the abundant resources and interaction points offered by forums, chat sites, and social media, and, more importantly, changing consumer behavior have updated this model in numerous ways.

As a result, businesses have power over how customers and brands intersect, and an SEO strategy that can divine customer intent while serving the brand’s core goals is critical to nearly every phase of the journey. Businesses that harness SEO to respond accurately will continue to grow and to adapt the models that best serve both customers and brands.

Positioning SEO

Search Engine Optimization (SEO) is simply a method of increasing a company’s visibility and relevance in organic search results so that its pages rank higher, increasing site visits and, ultimately, top-line revenue.

How Search Works

Each time someone performs a search, search engine algorithms select pages based on hundreds of factors that fall into two categories: relevance and authority. The pages selected for the Search Engine Results Page (SERP) must be relevant to the query, based on content topic, keywords, and other content-related factors. The order of the results is also determined by authority, or how trusted the pages are, based on an array of signals ranging from backlinks to social signals. Search engines aim to provide users with results that not only match the intent of their queries but also provide accurate, trusted, and in-depth information. All SEO efforts are geared around these two factors.

SEO and the Customer Journey

New online behavior has stretched and reimagined the customer journey. According to multiple recent studies covering the US and Western European countries, the prior linear path from awareness to engagement with a product or service (the ubiquitous “sales funnel”) has given way to a seemingly endless variety of paths to becoming a customer. In many markets, a majority of customers never want to speak with a sales representative; in others, such as Business Intelligence and Analytics software, customers depend on them. Over 80 percent of customers conduct research before engaging with a specific company and its product (V12, 2019). But when Amazon is part of the search, just over half of customers will abandon a researched, acceptable solution for a different one if it is available on Amazon. In many B2B industries, the buyer wants to complete more than half of the research online and then make the purchase offline (Wizdo, 2015). Finally, 2020’s pandemic-driven demand for online shopping may define the typical journey in the short term, but it is almost impossible to extrapolate beyond the unique impetus that produced this environment. Over the last six years, the paths consumers have taken before a new purchase have varied so widely that it is no surprise more than a dozen articles have been published heralding the “death of the customer journey.”

However, recent customer journeys do share some recurring elements, including a much more organic path of learning more about the problem or need and searching the Internet until those resources are exhausted. Customers then often begin looking at different solutions, comparing user feedback and reviews on social media, and sharing thoughts with other consumers. Finally, they choose a brand to engage. To position a brand in this new customer journey, whether in unique model-less journeys or among the commonalities that are beginning to appear, a company must be part of a broad range of touchpoints: the initial searches around the problem and the searches for various solutions and comparisons, long before visitors decide on a vendor or business. Ultimately, a company must position itself throughout the many possible intersections of brand and buyer.

SEO within a Strategic Framework

SEO is critical to achieving visibility and traffic. A strong SERP presence is key to driving organic traffic and, if the prerequisite components of a business and marketing strategy are sufficiently fulfilled, an essential tool for increasing leads, conversions, and revenue.

But simply increasing traffic is not a useful KPI in itself; its financial relevance depends on selecting the right keywords: those that align with a carefully crafted value proposition that exceeds a competitor’s, and those most likely to drive revenue. A successful SEO strategy depends first on accurately assessing the vital parameters of one’s own business. Next, one evaluates the competitive landscape and the KPIs of top competitors. Finally, the business creates a Marketing Strategic Plan that defines its specific focus, target market, and the tactics (including SEO) that will transform the business goal into superior value for customers and company. Once a company has defined its competitive advantage and its superior value proposition, it will know which keywords and queries to target.

An SEO strategy includes optimization in three general categories:

- Technical SEO: functional site characteristics of codebase and performance that enable search engines to find the site’s pages and help them understand their meaning.

- On-page SEO: content depth, formatting, vertical placement, and keyword optimization, the semantic qualities of each page that search engines use to understand the nuanced topic focus and organization of pages.

- Off-page SEO: referring domains and backlinks that signal the trustworthiness and authority of the page for search engines’ evaluation.

Additionally, local SEO is a specific set of practices enabling competitive ranking for searches with local intent (e.g., “office space near me”), and it can often give a local business its key differentiating SEO tactic.

Finally, SEO is not a one-time task. Instead, ongoing monitoring of key performance indicators, maintenance, and repair should be part of every business’s SEO strategy.

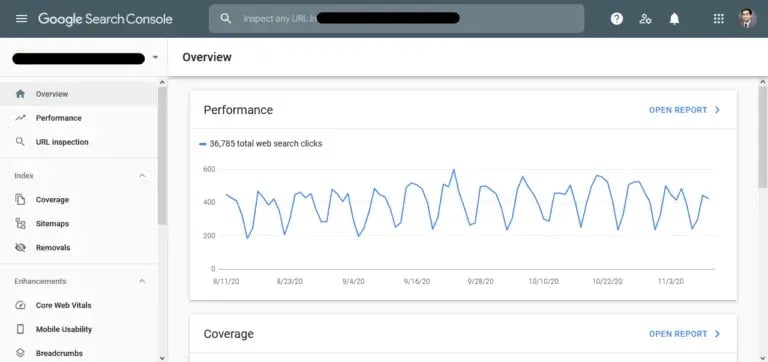

Technical SEO

The first step in preparing for technical SEO is to set up the website with the basic tools all SEO work requires: Google Search Console (GSC), Bing Webmaster Tools, Google Analytics, and an SEO plugin (especially for websites on a CMS). GSC provides quantitative data about how Google sees a website, enabling troubleshooting, corrections, and maintenance for improved search engine performance. To enable GSC, a site owner logs into their Google account, navigates to the GSC homepage, and adds the site URL or domain as a property to be managed by the tool. After site ownership is verified, GSC begins tracking performance. GSC tracks clicks, click-through rate (CTR), impressions, and crawl stats; provides numerous reports covering performance, indexing, mobile optimization, links, and search analytics; and allows the addition of elements such as sitemaps and structured data. For similar insight into how Microsoft Bing sees a website, companies can set up their sites in Bing Webmaster Tools.
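As an illustration of working with the clicks, impressions, and CTR data GSC reports, the sketch below parses a small CSV shaped like a Performance report export and flags queries that get many impressions but few clicks, which are often candidates for better titles and descriptions. The column names (`query`, `clicks`, `impressions`), the sample rows, and both thresholds are assumptions for illustration, not GSC’s exact export format.

```python
import csv
import io

# Hypothetical sample shaped like a GSC Performance export (column names assumed).
SAMPLE_EXPORT = """query,clicks,impressions
gourmet spices,40,2000
rare imported spices,5,1500
neospice,300,600
"""

def ctr(clicks: int, impressions: int) -> float:
    """Click-through rate = clicks / impressions (0.0 when there are no impressions)."""
    return clicks / impressions if impressions else 0.0

def low_ctr_queries(csv_text: str, min_impressions: int = 1000, max_ctr: float = 0.01):
    """Return (query, ctr) pairs that are seen often but rarely clicked."""
    flagged = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        clicks, impressions = int(row["clicks"]), int(row["impressions"])
        rate = ctr(clicks, impressions)
        if impressions >= min_impressions and rate <= max_ctr:
            flagged.append((row["query"], round(rate, 4)))
    return flagged

print(low_ctr_queries(SAMPLE_EXPORT))  # [('rare imported spices', 0.0033)]
```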

Google Analytics is the basic but powerful free version of Google’s analytics platform. Google Analytics 360, a more advanced analytics package, is priced in tiers by pageviews and provides additional features such as greater integration, data refresh frequency guarantees, and more sophisticated analytics. Many businesses will find the basic version sufficient, but numerous sites compare the two versions’ features to help determine whether the paid version is worthwhile for a business. Basic setup of Google Analytics is simple, and the platform includes a help system for specific configurations. Google Analytics enables ad campaign measurement, goal tracking (for different types of conversions), audience analysis, and a host of other analytics powered by detailed tracking of visitor interactions, and it is used by more than 65% of the top 1 million sites.

Depending on a website’s platform, it may benefit from, or even require, an SEO plugin. Sites using Content Management Systems (CMS), such as WordPress, benefit from an SEO plugin to dynamically manage and optimize the website.

Other utilities popular among SEO professionals include Ahrefs, Screaming Frog, SEMrush, and BrightEdge, and hundreds of other SEO tools are available to fulfill almost every need.

For a search engine to rank a website or page, it must first find the site, crawl its pages to determine their keywords and content, and add them to its index of web content. Setting up a website correctly, without technical glitches, and adding elements that help the search engine perform these tasks ensures the site is available for indexing whenever a search engine crawls it.

Security

At this point, all businesses should be using HTTPS encryption. Since Google made HTTPS a ranking signal in 2014 (Google, 2014), most websites have implemented the protocol, and most visitors will now abandon a cart that is not marked secure (Big Commerce, 2018).

Sitemap Files

As the name suggests, a sitemap lists a site’s URLs, indicating which content should be crawled and indexed and helping search engines determine which pages are most important. Various tools are available for creating a sitemap, after which GSC and Bing Webmaster Tools are used to submit the sitemap file.
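For sites without a plugin that generates one, a sitemap can also be produced with a short script. The sketch below builds an XML sitemap in the sitemaps.org format (a `urlset` of `url` entries, each with a `loc` and an optional `lastmod`) using only the Python standard library; the page list is hypothetical, and a real site would pull it from its CMS or a crawl.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"

def build_sitemap(pages):
    """Return sitemap XML for (url, lastmod) pairs; lastmod may be None."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        if lastmod:  # optional, in W3C date format such as 2020-11-01
            ET.SubElement(url, "lastmod").text = lastmod
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body

# Hypothetical pages for illustration.
print(build_sitemap([
    ("https://www.example.com/", "2020-11-01"),
    ("https://www.example.com/products/spices/ginseng", None),
]))
```

The resulting file is what gets submitted through GSC and Bing Webmaster Tools.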

Robots.txt File

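The robots.txt file tells search engine crawlers which parts of a site the owner does not want crawled, such as a section undergoing maintenance, private pages, or duplicated content. Because robots.txt controls crawling rather than indexing, a blocked URL can still appear in search results if other pages link to it; pages that must stay out of the index need a noindex directive instead. Search engines seldom list pages with duplicate or near-duplicate content (e.g., printer-friendly pages) multiple times in results; for the same reason, content republished from other sources is likely to be filtered out, negatively impacting the page’s organic visibility and traffic.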
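Before deploying robots.txt rules, it can help to check which URLs they actually block. Python’s standard `urllib.robotparser` module can evaluate rules locally; the sketch below feeds it a hypothetical robots.txt that blocks a maintenance area and printer-friendly duplicates, then asks whether a crawler may fetch sample URLs. The paths and domain are illustrative assumptions.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt blocking a maintenance area and printer-friendly duplicates.
ROBOTS_TXT = """\
User-agent: *
Disallow: /maintenance/
Disallow: /print/
"""

def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text without fetching it over the network."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser

parser = build_parser(ROBOTS_TXT)
for path in ["/products/spices/ginseng", "/print/ginseng", "/maintenance/index.html"]:
    print(path, parser.can_fetch("Googlebot", "https://www.example.com" + path))
```

This only shows whether crawling is allowed; as noted above, it says nothing about whether a URL ends up indexed.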

Structured Data

Structured data is a technique that allows Google to supplement web results with Rich Snippets: textual and visual displays that carry additional semantic value for search engine users (and for the listing owners). By using a standardized format to describe a webpage, site owners can categorize the page type and mark up content components, creating information modules that stand out. While the exact ranking impact of Rich Snippets is unclear, they enhance the user experience with easy-to-grasp content and will likely increase click-through rate (CTR) for certain keywords and pages.
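One widely used standardized format is JSON-LD with the schema.org vocabulary, embedded in a `<script type="application/ld+json">` tag. The sketch below generates minimal `Product` markup with a nested `Offer`; the product details are hypothetical, and search engines may require additional properties before showing a rich result, so treat this as a starting point rather than complete markup.

```python
import json

def product_jsonld(name: str, description: str, price: float, currency: str = "USD") -> str:
    """Return a <script> tag holding minimal schema.org Product markup as JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "description": description,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": currency,
        },
    }
    return '<script type="application/ld+json">\n' + json.dumps(data, indent=2) + "\n</script>"

# Hypothetical product for illustration.
print(product_jsonld("Ginseng root, 50 g", "Hand-selected imported ginseng.", 12.5))
```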

URL Structure

Complex, lengthy URLs are challenging for search engines to interpret accurately and can dilute the visibility of a keyword embedded in a long string of text. Short URLs with a clear keyword, by contrast, are optimized for search engines and clarify a site’s navigational structure. More importantly, a consistent URL and folder structure helps relevance and authority flow downward from a heavily linked and visited top page to the pages below it: it tells the search engine that these pages flow from the top level, so equity passes to them as well.
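Short keyword URLs are usually derived from page titles. A minimal sketch of that step, assuming a hypothetical stop-word list and a five-word cap, turns a title into a lowercase, hyphen-separated slug.

```python
import re
import unicodedata

# Hypothetical stop-word list; extend it to suit the site's language and style.
STOP_WORDS = {"a", "an", "and", "the", "of", "from", "for", "to", "in", "on", "with"}

def slugify(title: str, max_words: int = 5) -> str:
    """Turn a page title into a short, lowercase, hyphen-separated URL slug."""
    # Decompose accented characters and drop the accents ("Café" -> "Cafe").
    ascii_title = unicodedata.normalize("NFKD", title).encode("ascii", "ignore").decode("ascii")
    words = [w for w in re.findall(r"[a-z0-9]+", ascii_title.lower()) if w not in STOP_WORDS]
    return "-".join(words[:max_words])

print(slugify("Ginseng: Rare Imported Spice from Korea"))  # ginseng-rare-imported-spice-korea
```

Capping the word count keeps URLs short; the most important keyword should therefore appear early in the title.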

Broken Links

Broken links harm the user experience as well as search engines’ ability to index a site. A broken external link might fail because the website no longer exists, the page has moved without a redirect, or the target site’s URL structure has changed. This naturally occurring decay is sometimes called “link rot,” and a number of site maintenance tools (e.g., Screaming Frog, GSC) can detect it. Fixing broken links improves the visitor experience, and search engines can find content without repeatedly crawling into dead pages.

Broken internal links, by contrast, are usually the site owner’s (or webmaster’s) fault and responsibility. Simply mistyping links or restructuring a site without a URL mapping process can have a ripple effect, especially if a link points to a reusable template element that has since moved. Because interlinked pages create link equity by passing SEO value from one page to another, owners of large or complex sites use a URL mapping tool or template to ensure structural changes do not break internal links.
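The URL mapping idea can be sketched in a few lines: given each page’s internal links (for example, from a site crawl), the set of paths that currently resolve, and an old-to-new URL map recorded during a restructure, list every broken link and the replacement the map suggests. All inputs below are hypothetical; in practice a crawler would supply the link data.

```python
def find_broken_internal_links(site_links, live_pages, url_map):
    """Return (page, broken_target, suggested_target) for every internal link that
    points at a path that no longer resolves. suggested_target is None when the
    URL map has no entry for the old path."""
    report = []
    for page, targets in site_links.items():
        for target in targets:
            if target not in live_pages:
                report.append((page, target, url_map.get(target)))
    return report

# Hypothetical crawl data after a restructure.
site_links = {
    "/": ["/spices/", "/about-us.html"],
    "/spices/": ["/spices/ginseng", "/old/ginseng74.html"],
}
live_pages = {"/", "/spices/", "/spices/ginseng", "/about/"}
url_map = {"/about-us.html": "/about/"}

for row in find_broken_internal_links(site_links, live_pages, url_map):
    print(row)
# ('/', '/about-us.html', '/about/')
# ('/spices/', '/old/ginseng74.html', None)
```

Rows with no suggested target point to gaps in the URL map that need a decision: a redirect, a new target, or removal of the link.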

Orphaned Site Pages

Every page on a site should be linked from at least one other page. Without internal links, search engines will likely not reach the page while crawling the rest of the site. Consequently, the page receives no equity from other pages and no context for its meaning, so its organic visibility is lower. If the page is also missing from the sitemap, it will likely go unindexed. Orphaned pages can be found by comparing crawlable URLs against the URLs recorded in Google Analytics (Google Analytics captures any visited page as long as it carries the Google Analytics tracking snippet).
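The comparison described above amounts to a set difference: URLs known from Analytics (or the sitemap) minus URLs that some other crawled page links to. The sketch below assumes both inputs have already been exported and normalized to paths; the data is hypothetical.

```python
def find_orphans(known_pages, site_links, home="/"):
    """Pages known from Analytics or the sitemap that no other page links to."""
    linked = {
        target
        for page, targets in site_links.items()
        for target in targets
        if target != page  # a self-link does not rescue an orphan
    }
    return sorted(p for p in known_pages if p not in linked and p != home)

# Hypothetical inputs: URLs seen in Analytics vs. links found by a crawler.
analytics_pages = {"/", "/spices/", "/spices/ginseng", "/spices/saffron-2019-promo"}
site_links = {"/": ["/spices/"], "/spices/": ["/spices/ginseng", "/"]}
print(find_orphans(analytics_pages, site_links))  # ['/spices/saffron-2019-promo']
```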

Page Depth

Ideally, user-accessible pages should be no more than 3 or 4 clicks deep. Sites that go beyond this may be overly complex for both visitors and search engines, and should be optimized to improve navigation and conceptual clarity.
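Click depth is the length of the shortest link path from the home page, which a breadth-first search over the internal link graph computes directly. The sketch below assumes the link graph is already available as a dictionary (for example, from a crawler export) and flags pages deeper than a chosen limit; the graph is hypothetical.

```python
from collections import deque

def click_depths(site_links, home="/"):
    """Breadth-first search from the home page; depth = minimum clicks to reach a page."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in site_links.get(page, []):
            if target not in depths:  # first visit in BFS is the shortest path
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths

def too_deep(site_links, max_depth=3, home="/"):
    """Pages reachable from the home page only in more than max_depth clicks."""
    return sorted(p for p, d in click_depths(site_links, home).items() if d > max_depth)

# Hypothetical chain of nested category pages.
links = {"/": ["/a"], "/a": ["/a/b"], "/a/b": ["/a/b/c"], "/a/b/c": ["/a/b/c/d"]}
print(too_deep(links))  # ['/a/b/c/d']
```

Adding a single link from a shallow page (for example, the home page) to a deep one brings its depth down immediately, which is one way to flatten an overly deep structure.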

Page Speed

Load time affects both the user experience and how search engines assess quality. Both GSC and Bing Webmaster Tools show current page load times. Comparing load times across pages can help pinpoint specific problems that may require one or more changes: image and video compression, fewer redirects, or faster server response time. Over half of a site’s visitors will abandon it if a page takes longer than 3 seconds to load (Hosting Manual, 2020). While always critical to visitors, page load time will also be part of an overall user experience metric in a new Google ranking algorithm expected in 2021 (Schwartz, 2020).

Mobile Optimization

While mobile optimization is also a focus of Google’s coming “experience” algorithm, it was a prominent factor long before Google moved to mobile-first indexing in 2019. Reviewing any traffic statistic by device makes a clear case for mobile optimization because of the volume of organic traffic at stake: over 60% of all searches are conducted from a mobile device (Statista, 2019). A mobile readiness tool such as Google’s Mobile-Friendly Test provides a basic check, but optimization spans a wide variety of approaches, including major site configuration choices (e.g., responsive design), shortened titles and descriptions (including meta tags), structured data (so rich snippets stand out), and local optimization (if the business has a local presence).
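Responsive design generally relies on a viewport meta tag such as `<meta name="viewport" content="width=device-width, initial-scale=1">`. The sketch below is a very rough heuristic that only checks for that tag with Python’s standard HTML parser; it is an illustrative assumption about one prerequisite, not a substitute for a full mobile-readiness test.

```python
from html.parser import HTMLParser

class ViewportFinder(HTMLParser):
    """Records the content of a <meta name="viewport"> tag, if any."""

    def __init__(self):
        super().__init__()
        self.viewport = None

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "meta" and (attributes.get("name") or "").lower() == "viewport":
            self.viewport = attributes.get("content") or ""

def has_responsive_viewport(html: str) -> bool:
    """True when the page declares a viewport that follows the device width."""
    finder = ViewportFinder()
    finder.feed(html)
    return finder.viewport is not None and "width=device-width" in finder.viewport.replace(" ", "")

print(has_responsive_viewport(
    '<head><meta name="viewport" content="width=device-width, initial-scale=1"></head>'
))
```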

On-Page SEO

Strategic Context Creates Keyword Value

To gain value from increased visibility and organic traffic, a company must ensure that its keyword strategy aligns with its larger strategy: a value proposition informed by a competitive analysis and by a strategic framework that links goal, strategy, and tactics. In other words, the targeted keywords must be of high value to the company goal, strategy, and tactics behind the flagship product or service it sells. Once these are defined, a company needs to find the keywords and queries whose intent is most closely connected to its offering and to the topics of the website, its sections, and its pages.

Keyword Research and Analysis

Finding the right keywords and queries requires researching search trends, search volume, and competition. Creating buyer personas helps identify the most important topics within the business, the questions buyers want answered, and the queries most likely to align customer intent with the company’s offering. The keywords that deserve the most attention are the highly competitive ones that drive leads, conversions, or sales. Companies with an online history should verify which keywords they already rank for. Using third-party SEO tools (e.g., Ahrefs, BrightEdge, SEMrush), they can quickly search through their organic traffic to find the keywords customers currently use to find the business. If goals and conversions are configured in an analytics package, a business can quickly identify exactly what has driven recent conversions.

When researching new keywords, successful companies find which search terms competitors are using, identify their own gaps, and target keywords that competitors use successfully in a shared market. Competitor analysis can surface new high-value keywords and should be conducted regularly, even weekly, depending on the business.
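A keyword gap analysis is essentially a set difference: terms competitors rank for that the company does not. The sketch below assumes ranking keyword lists have already been exported from an SEO tool for the company and each competitor (the data is hypothetical) and ranks the gaps by how many competitors share them.

```python
def keyword_gap(our_keywords, competitor_keywords):
    """Keywords at least one competitor ranks for that we do not, most widely shared first."""
    counts = {}
    for keywords in competitor_keywords.values():
        for kw in set(keywords) - set(our_keywords):
            counts[kw] = counts.get(kw, 0) + 1
    # Sort by number of competitors ranking for the keyword, then alphabetically.
    return sorted(counts.items(), key=lambda item: (-item[1], item[0]))

# Hypothetical ranking keyword exports.
ours = {"gourmet spices", "buy saffron"}
competitors = {
    "spiceco.example": {"gourmet spices", "rare spices", "saffron price"},
    "herbhouse.example": {"rare spices", "organic turmeric"},
}
print(keyword_gap(ours, competitors))
# [('rare spices', 2), ('organic turmeric', 1), ('saffron price', 1)]
```

Keywords shared by several competitors are a reasonable place to start, but each still needs the relevance and intent review described in this section.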

Other keyword research approaches include consulting internal customer-facing staff and researching long-tail keywords. A company’s Customer Support or Sales Department can often provide actual customer questions received by their respective departments; however, these are less quantifiable than captured search data. Long-tail keywords, the more specific phrases searchers enter, get much less traffic but are less competitive and often convert at a higher rate. Many SEO tools (e.g., Ahrefs, SEMrush) can be used to identify long-tail keyword variations.

When evaluating a set of candidate keywords, a business needs to analyze each for relevance, authority, and keyword competition and volume. A keyword’s relevance reflects how well the page answers the user’s need (or, more precisely, intent), and the site’s page content should be the best answer for that user. The page targeting the keyword must also be authoritative: the website, and even the specific page, earns authority mainly through its link profile, driven by backlinks from high-authority pages. Finally, search volume reveals how popular specific terms are for describing a searcher’s intent. Low-volume keywords may also convey this intent accurately, but they will lead to few conversions. Businesses can succeed by assessing the full spectrum of keywords in a topic and picking “low-hanging fruit” even when it is not a particularly powerful revenue driver, although they typically do not optimize for low-volume keywords.
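One way to weigh these factors together is a simple scoring function. The sketch below uses a hypothetical formula (relevance times monthly volume, divided by one plus difficulty) and a hypothetical minimum-volume cutoff; the field scales and all sample numbers are assumptions, and the formula is an illustration, not an industry standard.

```python
from dataclasses import dataclass

@dataclass
class Keyword:
    term: str
    monthly_volume: int  # estimated searches per month, e.g. from an SEO tool
    difficulty: int      # 0-100 competition score (scale assumed)
    relevance: float     # 0-1, judged by the team against the value proposition

def priority(kw: Keyword) -> float:
    """Hypothetical score: relevant, high-volume, low-competition terms rank first."""
    return kw.relevance * kw.monthly_volume / (1 + kw.difficulty)

def shortlist(keywords, min_volume=50):
    """Drop very low-volume terms, then order the rest by priority."""
    candidates = [k for k in keywords if k.monthly_volume >= min_volume]
    return [k.term for k in sorted(candidates, key=priority, reverse=True)]

# Hypothetical keyword data.
kws = [
    Keyword("gourmet spices", 2400, 60, 0.9),
    Keyword("rare imported spices", 320, 20, 1.0),
    Keyword("saffron", 8100, 85, 0.6),
    Keyword("ginseng tea recipe", 30, 5, 0.3),
]
print(shortlist(kws))  # ['saffron', 'gourmet spices', 'rare imported spices']
```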

User Intent

Today, the user intent behind a query is a ranking factor that businesses must evaluate during keyword research. A page should solve the problem or meet the need the visitor intended, rather than merely being about the topic the keyword represents. One way to gauge a keyword’s likely intent is to enter it as-is into a search engine and review the types of results returned. A more complete analysis assesses the intent of the pages that rank for the search term and ensures the business’s own pages align with that intent.
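When sorting a long keyword list, a crude first pass can bucket queries by common modifier words before the manual SERP review. The modifier lists and their precedence below are hypothetical assumptions; queries without a modifier are left as “unclear,” which is often accurate for short head terms.

```python
# Hypothetical modifier lists, checked in this order (transactional first).
INTENT_MODIFIERS = {
    "transactional": ("buy", "price", "order", "discount", "coupon", "shop"),
    "local": ("near me", "nearby", "open now"),
    "informational": ("how", "what", "why", "guide", "recipe", "vs"),
}

def guess_intent(query: str) -> str:
    """Return the first intent whose modifier appears as a whole word or phrase."""
    padded = " " + " ".join(query.lower().split()) + " "
    for intent, modifiers in INTENT_MODIFIERS.items():
        for modifier in modifiers:
            if f" {modifier} " in padded:  # padding prevents partial-word matches
                return intent
    return "unclear"

for q in ["buy saffron online", "coffee near me", "how to store saffron", "best coffee"]:
    print(q, "->", guess_intent(q))
```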

Branded vs Contextual Search Terms

If a business dominates or establishes itself in a market well enough that brand searches are common, it may earn a knowledge panel, a type of rich result Google displays that contains a mini wiki of information with media elements and links. Companies can claim their knowledge panel with a Google Brand account.

A business with a unique name should have little difficulty owning its branded search terms, and it should dominate page 1 for them. But businesses need visitors to find their sites not only when they search for the brand (that’s a given) but also when they search for the product or service category: contextual searches. Someone who searches for the brand Neospice, an online seller of gourmet, rare, or quality imported spices, will likely find Neospice in the SERP thanks to its unique name. What the business needs is SERP ranking for contextual searches such as “gourmet spices” or “rare imported spices.”
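To track the two kinds of traffic separately, search queries (for example, from a GSC export) can be split into branded and contextual groups. The sketch below assumes a hypothetical list of brand spellings for the Neospice example; misspellings and product names that are brands in their own right would need to be added by hand.

```python
# Hypothetical spellings of the brand name.
BRAND_TERMS = ("neospice", "neo spice")

def split_queries(queries):
    """Separate branded queries from contextual (category) queries."""
    branded, contextual = [], []
    for q in queries:
        if any(term in q.lower() for term in BRAND_TERMS):
            branded.append(q)
        else:
            contextual.append(q)
    return branded, contextual

branded, contextual = split_queries(
    ["Neospice ginseng", "gourmet spices", "neo spice reviews", "rare imported spices"]
)
print(branded)     # ['Neospice ginseng', 'neo spice reviews']
print(contextual)  # ['gourmet spices', 'rare imported spices']
```

Growth in the contextual group is the better indicator of the category visibility this section describes.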

Keyword Optimization

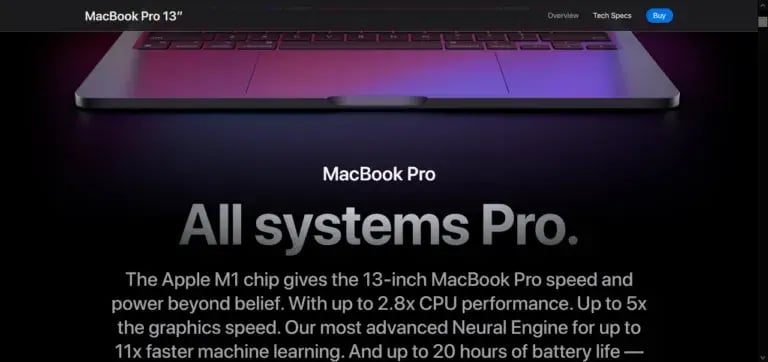

Keyword Cannibalization

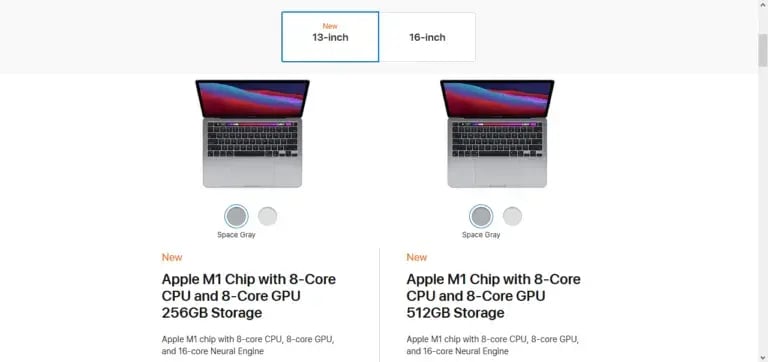

Keyword cannibalization is an easily misunderstood SEO issue. Simply put, when multiple pages on a website are optimized for the same keyword and compete against one another for the same search query, each lowers the others’ ranking in that SERP: when two pages on the same topic exist on a domain, Google cannot easily determine which one should rank. Checking whether this is occurring requires a simple Google query: site:domain.com "keyword". If the two pages appear in adjacent positions, such as 7 and 8, they are probably competing against each other. Company sites, particularly blogs with a long active history and many posts centered on narrow topics, are prone to keyword cannibalization. The solution is to decide which page to keep, then combine or delete the others.
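The same check can be run in bulk on query-and-page data, such as a GSC performance export broken down by both query and page. The sketch below assumes rows of (query, page, average position), which is a hypothetical shape, and lists queries for which more than one URL on the site ranks. These are only candidates: whether the pages truly cannibalize each other depends on whether they serve the same intent, which still needs a manual look.

```python
from collections import defaultdict

def cannibalization_candidates(rows):
    """rows: (query, page_url, position) tuples.
    Returns {query: [urls, best position first]} for queries with more than one ranking URL."""
    pages_by_query = defaultdict(list)
    for query, page, position in rows:
        pages_by_query[query].append((position, page))
    return {
        query: [page for _, page in sorted(ranked)]
        for query, ranked in pages_by_query.items()
        if len({page for _, page in ranked}) > 1
    }

# Hypothetical export rows.
rows = [
    ("saffron uses", "/blog/saffron-uses", 7.2),
    ("saffron uses", "/blog/10-ways-to-use-saffron", 8.1),
    ("buy saffron", "/products/spices/saffron", 3.4),
]
print(cannibalization_candidates(rows))
# {'saffron uses': ['/blog/saffron-uses', '/blog/10-ways-to-use-saffron']}
```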

This does not mean, however, that multiple pages on a site cannot be optimized for the same keyword; cannibalization occurs only when the pages also serve the same intent. For example, Apple has two pages optimized for “MacBook Pro” on its website, both of which allow purchase. The first page shows two models side by side (13” and 15”), each with a “Buy” button and a “Learn More” link. The second page shows three 13” models side by side, with detailed specs underneath each and options to select features (e.g., 128GB or 256GB SSD). The first page is aimed at someone learning about the MacBook Pro early in the sales cycle, while the second targets a buyer at the point of purchase. The first is informational and the second transactional: the intent is different.

Title, H1 and Meta-Description Tags

Search engines use title tags, H1 tags, and meta description tags to understand a page’s topic and to display a title and description in the SERP. Tags should always be written carefully and concisely and should clearly indicate the content they reference. Both title tags and description tags should be limited in length; in particular, title tags that are truncated in the SERP should always be shortened to 60 characters or fewer.

Title tags should include targeted keywords, ideally near the beginning. In a long page of search engine results, visitors quickly scan the results looking at nothing more than the page titles listed; as a result, the title’s ability to clearly indicate topic and purpose is a prerequisite for showing a clear match between search query and a page that responds directly to it.

The short blurb below the title in the SERP is often pulled from the meta description tag; if the tag is missing, the search engine generates its own snippet from page text, which may not represent the page well. Each page should have exactly one H1 tag, which should also include the page’s main target keyword. In some CMSs, this tag is automatically the page title.
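These rules lend themselves to an automated audit. The sketch below uses Python’s standard HTML parser to extract the title, meta description, and H1 count from a page and report problems. The 60-character title limit comes from the text above; the 160-character description limit is an assumption for illustration, since search engines do not publish a fixed cutoff.

```python
from html.parser import HTMLParser

class HeadTagAudit(HTMLParser):
    """Collects the <title> text, the meta description, and the number of <h1> tags."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.description = None
        self.h1_count = 0
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "h1":
            self.h1_count += 1
        elif tag == "meta" and (attributes.get("name") or "").lower() == "description":
            self.description = attributes.get("content") or ""

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data

def audit_page(html, title_limit=60, description_limit=160):
    """Return a list of human-readable issues (empty when the page passes)."""
    parser = HeadTagAudit()
    parser.feed(html)
    issues = []
    title = parser.title.strip()
    if not title:
        issues.append("missing title")
    elif len(title) > title_limit:
        issues.append(f"title is {len(title)} characters (limit {title_limit})")
    if parser.description is None:
        issues.append("missing meta description")
    elif len(parser.description) > description_limit:
        issues.append(f"meta description is {len(parser.description)} characters (limit {description_limit})")
    if parser.h1_count != 1:
        issues.append(f"{parser.h1_count} H1 tags (expected 1)")
    return issues
```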

URL

Main pages, such as those at the beginning of a content section, should include the keyword in the URL when natural, and as little else as possible. If a business specializes in importing spices, particularly ginseng, the URL of its ginseng page should not be www.andersonfl.com/indiaimport/47/ginseng74.html but rather www.neospice.com/products/spices/ginseng.

Alt tags for Images

Because search engines see only text and links, an informative image, especially one related to an important keyword, is invisible to them unless it is identified by a descriptive filename or an ALT tag (designed for accessibility, but functioning as an SEO signal as well). Moreover, designing the site for accessibility not only serves a diverse set of visitors but may also help it comply with legal accessibility requirements.
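Missing ALT tags are easy to find automatically. The sketch below lists the `src` of every image that has no `alt` attribute at all. It deliberately does not flag `alt=""`, because an empty alt is the accepted accessibility practice for purely decorative images; the sample markup is hypothetical.

```python
from html.parser import HTMLParser

class MissingAltFinder(HTMLParser):
    """Collects the src of every <img> that has no alt attribute at all."""

    def __init__(self):
        super().__init__()
        self.missing = []

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        # alt="" is deliberate for decorative images, so only a missing attribute is flagged.
        if tag == "img" and "alt" not in attributes:
            self.missing.append(attributes.get("src") or "(no src)")

def images_missing_alt(html: str) -> list:
    finder = MissingAltFinder()
    finder.feed(html)
    return finder.missing

page = ('<img src="ginseng.jpg" alt="Dried Korean ginseng roots">'
        '<img src="saffron.jpg">'
        '<img src="divider.png" alt="">')
print(images_missing_alt(page))  # ['saffron.jpg']
```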

Semantic Keywords

Semantic keywords, or semantically related keywords, are words that provide contextual indicators for a keyword. Considering the user intent behind every query means understanding how a keyword is being used: does “lemon” refer to the fruit or to a particularly bad automobile? Semantic keywords such as “used cars,” “guarantees,” or “recall” signal that “lemon” in this case is not a fruit.

It’s a good idea for the targeted keyword to appear early in the body of text as well. If a page is focused around a specific topic, the representative keyword should be readily apparent in the narrative or descriptive text.

Audit Content and Prune

SEO practitioners often recommend removing weak content (content that neither ranks nor provides value) if it will not be updated and improved. Regularly conducting a content audit (starting with a URL report sorted by clicks or conversions) and removing unnecessary content is time well spent.
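The first pass of such an audit can be scripted from a URL report. The sketch below assumes rows of (url, clicks, conversions) for the audit period and a hypothetical 10-click threshold; it only produces a review list, since each page still needs a decision to update, merge, or remove it.

```python
def pruning_candidates(url_report, min_clicks=10):
    """url_report: (url, clicks, conversions) rows for the audit period.
    Returns URLs with few clicks and no conversions, fewest clicks first."""
    weak = [row for row in url_report if row[1] < min_clicks and row[2] == 0]
    return [url for url, _, _ in sorted(weak, key=lambda row: (row[1], row[0]))]

# Hypothetical URL report.
report = [
    ("/blog/2016-trade-show", 0, 0),
    ("/blog/saffron-guide", 420, 12),
    ("/blog/old-promo", 0, 0),
    ("/blog/pepper-faq", 3, 0),
]
print(pruning_candidates(report))
# ['/blog/2016-trade-show', '/blog/old-promo', '/blog/pepper-faq']
```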

Non-Keyword Optimization

Improve Internal Linking

An internally logical, interlinked site helps search engines see the site’s topic more clearly, amplifying its purpose and particular topic focus. In addition, visitors who can easily move through the site without first returning to a main menu will likely produce a lower bounce rate (Shewan, 2018). Using distinctive (non-generic), descriptive anchor text for links whenever possible signals a specific connection to the linked page rather than just a general similarity in topic area. Finally, adding just a few internal links from authoritative, ranking pages elsewhere on the site to lower-performing pages improves overall value by sharing link equity with them.
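Generic anchor text is simple to detect once a crawler has listed each link’s anchor text and target. The sketch below assumes that input as (anchor_text, target_url) pairs and uses a hypothetical list of generic phrases.

```python
# Hypothetical list of non-descriptive anchor phrases.
GENERIC_ANCHORS = {"click here", "here", "read more", "learn more", "more", "this page", "link"}

def generic_anchor_links(links):
    """links: (anchor_text, target_url) pairs; returns those with non-descriptive anchors."""
    return [(text, url) for text, url in links if " ".join(text.lower().split()) in GENERIC_ANCHORS]

# Hypothetical crawl output.
links = [
    ("Click here", "/spices/saffron"),
    ("saffron buying guide", "/blog/saffron-guide"),
    ("Read  more", "/blog/ginseng"),
]
print(generic_anchor_links(links))
# [('Click here', '/spices/saffron'), ('Read  more', '/blog/ginseng')]
```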

Use External Links

External links may not create the SEO equity that quality backlinks do, but they can improve the user experience and increase a site’s value as a resource for a company’s target market, helping position the site as an authority by linking out to aligned, valued information when specific backlinks are currently unavailable.

Content Creation

While “content is king” may be a cliché, the qualities search engines treat as signals of quality content not only lead to SERP ranking but also compel visitors to return, increasing popularity and ranking equity over time. Longer page length, coherent content structure, and frequent updates and additions help search engines assess content quality.

Content Updates

Managing a large website is demanding with or without a CMS, and a universal challenge is keeping the pages updated and current; in some fields, such as technology products, a product life cycle can be as short as six months. However, of all the tasks that can improve rankings, refreshing content may be one of the simplest: the company will have current and accurate information, the new content will be captured and indexed in the next crawl, and the site will provide a better experience for visitors.

Referring Domains

When a website references another company’s website and links to it for the benefit of its readers, this referring domain has created a backlink to the referenced website. When a popular website references another, it can increase the referenced site’s popularity. If the referring domain has high authority, it can add more SEO value by sharing link equity with the referenced site. The value of backlinks is a function of both quantity and quality.
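Quantity has two distinct measures: the number of backlinks and the number of unique referring domains, since many links from one site count differently from single links across many sites. The sketch below groups a hypothetical list of linking page URLs by host. Stripping a leading `www.` is a simplification that does not resolve registrable domains in general, and quality still requires authority metrics from a third-party tool.

```python
from urllib.parse import urlparse

def referring_domains(backlink_sources):
    """Count backlinks per referring domain (host with any leading 'www.' removed)."""
    counts = {}
    for url in backlink_sources:
        host = urlparse(url).hostname or ""
        domain = host[4:] if host.startswith("www.") else host
        counts[domain] = counts.get(domain, 0) + 1
    return counts

# Hypothetical backlink sources.
sources = [
    "https://www.foodblog.example/post-1",
    "https://foodblog.example/post-2",
    "https://news.example/spices",
]
domains = referring_domains(sources)
print(f"{len(sources)} backlinks from {len(domains)} referring domains: {domains}")
```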

Backlink Quality

Backlink quality is a mix of the referring site’s popularity (volume of traffic), its relevance (topic similarity to the backlinked site), and its authority (trustworthiness). While the first two qualities are self-evident, authority is based on trust, typically built up over time, and is easier to achieve for a high-profile national site. Popular articles of national importance, especially breaking news, can attract thousands of backlinks per day. Even if only a small percentage of these come from sites with authority, the high-authority referring domains can still number in the hundreds, and at that point the site can be said to have strong domain authority.

Link Building

Backlinks can be built through a wide range of practices: sites that evaluate similar topics may link organically as part of their editorial commentary, or a site can reach out directly to likely candidates for linking, offer to guest post on a relevant blog, or seek out profile sites that include links.

Good candidates for link building share some obvious qualities: domain authority, similar topics with overlapping audiences, and significant traffic volume. Additional qualities include a history of accepting guest posts and broken links directly relevant to pages on the link-builder’s site. This latter approach is possible because many websites (even popular and authoritative ones) have broken links at any given time: links that once connected to relevant content that has moved, changed URL structure, or simply gone away. Reaching out to the site’s webmaster with an offer of replacement content they can link to benefits both parties. Similarly, some websites may already mention the link-building site without linking to it, providing another opportunity to ask the webmaster or owner for a linked reference. Several utilities (Screaming Frog, Ahrefs, etc.) can find broken links in specific topic areas, and the site owners can then be approached with a link offer.

A good starting point, however, is to run backlink checks on relevant websites, especially those linking to competitors: sites that once referred to competitors can be persuaded to point to the link-building site instead, for significant advantage. Some webmasters are inundated with requests and may not always be receptive. However, if link-building site owners take the time to find the right contact, approach them with a pleasant, helpful demeanor and convincing reasons to link, and keep the communication brief and to the point, a broken link building strategy can provide additional quality links each month.

Local SEO

Local searches differ from national ones not only because their target is nearby but also because the search intent is local, the focus is specific to the relevant location, and they usually come from mobile users. As a result, search engines respond differently to local searches, and the SEO requirements for targeting local queries reflect their unique factors.

Local SEO Factors

Local searches are defined by queries related to specific locations and, while informational, quickly turn transactional: over 75% of smartphone users who search for a nearby shop visit a business within 24 hours. Local searchers want complete information: address, hours, phone number, and customer reviews. Their intent is fundamentally different, and search engines must interpret it from a wide range of possible intentions. Consider:

“Coffee near me”

“Coffee in chicago”

“Best coffee”

Google can readily recognize the first two queries as having local intent, but the third could reflect a number of intents: looking for coffee review sites, the top coffee to buy in a supermarket, or the best coffee nearby, even though “near me” is unsaid. Google will usually show coffee bars in a Local Pack or map while also listing coffee ranking sites. Searchers have largely stopped entering their city or zip code in favor of “near me,” and now they are dropping “near me” as well.

Optimizing for local search requires considering user intent and giving searchers enough information to act on immediately, since these informational searches quickly become transactional. With a complete and current Google My Business profile, a clear NAP on main site sections, and customer reviews, businesses provide a concise set of data points that allow searchers to make a quick decision.

Results can take the form of more complete information, such as Google’s Knowledge Panel, Maps, or Local Packs: search engine results that typically provide everything a person needs to select a business found through a contextual or brand search, including the business’s name, address, and phone number (NAP), description and reviews, and operating hours. To take advantage of these Google features, businesses must complete and optimize their Google My Business profile.
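Because the same NAP appears on the website, in Google My Business, and in other listings, formatting drift between them is common. The sketch below normalizes each record (lowercase, punctuation removed, phone reduced to its last ten digits, which assumes North American numbers) and reports listings that differ from the website. The business data is hypothetical, and abbreviation differences such as “St” versus “Street” would still be reported as mismatches.

```python
import re

def normalize_nap(name, address, phone):
    """Normalize a name/address/phone record so formatting differences don't count as mismatches."""
    def clean(text):
        return " ".join(re.sub(r"[^a-z0-9 ]", "", text.lower()).split())
    digits = re.sub(r"\D", "", phone)[-10:]  # assumes 10-digit North American numbers
    return (clean(name), clean(address), digits)

def inconsistent_listings(listings):
    """listings: {source: (name, address, phone)}; returns sources that differ from the website."""
    reference = normalize_nap(*listings["website"])
    return sorted(src for src, nap in listings.items() if normalize_nap(*nap) != reference)

# Hypothetical listings for one business.
listings = {
    "website": ("Neospice", "120 Main St, Springfield", "(555) 010-0199"),
    "gmb": ("Neospice", "120 Main St., Springfield", "555-010-0199"),
    "directory": ("Neo Spice Inc", "12 Main St, Springfield", "555.010.0199"),
}
print(inconsistent_listings(listings))  # ['directory']
```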

Google My Business

Google My Business (GMB) is a good example of a free local promotion opportunity; a company that does not use it is leaving free and impactful local listings unused. Taking the time to optimize a business’s GMB profile means the information in Google’s Knowledge Panel, Local Pack, and Maps can be carefully managed and leveraged for maximum benefit. GMB allows businesses to collect and display reviews, track multiple unique data points, and optimize their listing with customer Q&A, preset attributes, and Google Posts (add-on widgets for specific time-bound messaging, including a Call to Action).

Monitoring

Companies invest substantial effort to set up technical SEO site features, develop and execute a comprehensive content strategy, and build a large number of quality links. However, increasing visibility, ranking, and quality organic traffic is a long-term commitment, and gains will recede if an SEO strategy is treated as a one-off initiative with an end date. Instead, ongoing monitoring and maintenance are part of any strategic view of organic traffic.

Tracking KPIs

Monitoring long-term SEO results requires ongoing attention to key performance indicators (KPIs), with more strategically mature organizations homing in on a custom set of KPIs most relevant to their current strategic stage. Common KPIs include keyword ranking movement, share of voice, organic traffic growth, conversions from organic traffic, and user engagement (e.g., average time on page, bounce rate, or pages viewed). Companies with specific strategic initiatives may choose more granular indicators, such as decreases or increases in cart abandonment, keyword rankings for individual targeted landing pages, or traffic from a specific or temporary referral channel.
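Several of these KPIs are simple ratios once the raw counts are exported. The sketch below assumes hypothetical monthly totals for organic sessions, conversions, and single-page sessions, and uses simplified formulas (for example, bounce rate as single-page sessions divided by sessions); analytics platforms may define these metrics differently, so treat it as an illustration rather than a reference implementation.

```python
from dataclasses import dataclass


@dataclass
class OrganicPeriod:
    """Hypothetical organic-traffic totals for one reporting period."""
    sessions: int
    conversions: int
    single_page_sessions: int


def pct_change(previous: float, current: float) -> float:
    """Percentage change from the previous period to the current one."""
    if previous == 0:
        raise ValueError("previous period has no data")
    return (current - previous) / previous * 100


def kpi_summary(previous: OrganicPeriod, current: OrganicPeriod) -> dict:
    """Compute a few common organic KPIs using simplified definitions."""
    return {
        "organic_traffic_growth_pct": round(pct_change(previous.sessions, current.sessions), 1),
        "organic_conversion_rate_pct": round(current.conversions / current.sessions * 100, 2),
        "bounce_rate_pct": round(current.single_page_sessions / current.sessions * 100, 1),
    }


last_month = OrganicPeriod(sessions=12_000, conversions=240, single_page_sessions=6_000)
this_month = OrganicPeriod(sessions=13_500, conversions=297, single_page_sessions=6_075)
print(kpi_summary(last_month, this_month))
# {'organic_traffic_growth_pct': 12.5, 'organic_conversion_rate_pct': 2.2, 'bounce_rate_pct': 45.0}
```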

Monitoring Platforms

While a good data analytics platform can provide most of the elements that matter to a site owner, stakeholders should also have a dashboard that shows the vast majority of targeted indicators at a glance, without drilling deep into an application. Other tools, such as Google Data Studio, can pull data from multiple sources into custom dashboards and reports that stakeholders (e.g., senior marketing staff, the executive team) can use for quick summaries of KPIs and other high-value data.

Scheduled Monitoring

Businesses set monitoring and reporting schedules based on the purpose of each report and the needs of its audience and initiative. Long-term business initiatives may need only a monthly dashboard or report to show trends clearly. Short-term initiatives (e.g., trying new channels, products, or services) often require the project team to review relevant data points daily (or more frequently), allowing for responsive adjustments if issues arise or as insights are gained. The executive team may need only the 2-3 main KPIs on a monthly basis, whereas the marketing team will likely review a larger set of indicators more often.
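The audience-based cadences described above can be written down as a small configuration that a reporting job consults each day. This is a minimal sketch: the audience names, the weekly cadence assumed for the marketing team, the KPI lists, and the rule that monthly reports run on the 1st and weekly reports on Mondays are all hypothetical choices.

```python
from datetime import date

# Hypothetical schedule; cadences and KPI lists are illustrative assumptions.
REPORT_SCHEDULE = {
    "executive_team": {
        "cadence": "monthly",
        "kpis": ["organic_traffic", "organic_conversions", "share_of_voice"],
    },
    "marketing_team": {
        "cadence": "weekly",
        "kpis": ["organic_traffic", "organic_conversions", "share_of_voice",
                 "keyword_rankings", "bounce_rate", "pages_per_session"],
    },
    "pilot_project_team": {
        "cadence": "daily",
        "kpis": ["landing_page_traffic", "cart_abandonment"],
    },
}


def is_due(cadence: str, day: date) -> bool:
    """Daily reports always run; weekly on Mondays; monthly on the 1st."""
    if cadence == "daily":
        return True
    if cadence == "weekly":
        return day.weekday() == 0  # Monday
    if cadence == "monthly":
        return day.day == 1
    raise ValueError(f"unknown cadence: {cadence}")


def reports_due(day: date) -> list:
    """Return the audiences whose report should be produced on this day."""
    return [audience for audience, config in REPORT_SCHEDULE.items()
            if is_due(config["cadence"], day)]


print(reports_due(date(2024, 4, 1)))  # a Monday and the 1st: all three audiences
print(reports_due(date(2024, 4, 2)))  # ['pilot_project_team']
```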

In addition to scheduled monitoring, tools such as Google Analytics can be used to create custom alerts for change thresholds in traffic, conversions, online sales and other impactful user interactions for a site. For webmasters or marketers responsible for one or more websites, custom alerts provide a safety net to ensure important trends are identified immediately.
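Threshold alerts of this kind compare a current value against a baseline and notify when the change exceeds a set percentage. The sketch below is not the Google Analytics alert feature; it is a hypothetical stand-alone illustration that assumes daily metric values are available as lists, uses a trailing average as the baseline, and treats a 20% change in either direction as the alert threshold.

```python
from statistics import mean


def threshold_alerts(history: dict, today: dict, threshold_pct: float = 20.0) -> list:
    """Flag metrics whose value today deviates from their trailing average
    by more than threshold_pct percent, in either direction."""
    alerts = []
    for metric, values in history.items():
        baseline = mean(values)
        if baseline == 0 or metric not in today:
            continue  # no usable baseline or no value to compare
        change = (today[metric] - baseline) / baseline * 100
        if abs(change) > threshold_pct:
            alerts.append(f"{metric}: {change:+.1f}% vs. {len(values)}-day average")
    return alerts


# Hypothetical seven days of history and today's values.
history = {
    "organic_sessions": [1000, 980, 1020, 1000, 1000, 990, 1010],
    "conversions": [20, 22, 18, 20, 21, 19, 20],
}
today = {"organic_sessions": 700, "conversions": 21}
print(threshold_alerts(history, today))
# ['organic_sessions: -30.0% vs. 7-day average']
```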

Environmental Monitoring

Monitoring outcomes usually involves both measuring performance and tracking changes in the environment in which that performance occurs. In SEO monitoring, KPI tracking and analysis serve as the performance measurement, while ongoing scanning of the competitive and technical environment covers the environmental side.

Forward-looking businesses monitor the competitive environment for changes, especially keyword ranking changes for top competitors, focusing on added and modified keywords that may illuminate gaps and opportunities to close them. Companies can run backlink checks each month, noting added backlinks as potential new resources and lost backlinks as possible candidates for broken link building. The technical environment includes the company’s own scheduled infrastructure upgrades as well as the search engine environment in which SEO occurs. Google updates its search ranking algorithm fairly often, and some websites can lose traffic volume overnight. For several days after an update, businesses should monitor traffic for changes to determine whether corrective action is required. Moz, Search Engine Journal, and Search Engine Land track these updates, usually with descriptions of the corresponding algorithm changes.
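A monthly backlink check reduces to comparing two snapshots. The sketch below assumes each month’s export from a backlink tool has already been loaded into a set of referring URLs (the loading step and the example URLs are hypothetical); set differences then give the added links and the lost links that may be worth investigating for broken link building. The same comparison works for two snapshots of a competitor’s ranking keywords.

```python
def backlink_changes(last_month: set, this_month: set) -> dict:
    """Compare two monthly backlink snapshots (sets of referring URLs)."""
    return {
        # Present now but not last month: potential new resources.
        "added": sorted(this_month - last_month),
        # Present last month but gone now: candidates to investigate
        # for broken link building.
        "lost": sorted(last_month - this_month),
    }


# Hypothetical snapshots using placeholder domains.
march = {"https://blog.example.org/best-cafes",
         "https://news.example.com/openings",
         "https://directory.example.net/coffee"}
april = {"https://blog.example.org/best-cafes",
         "https://directory.example.net/coffee",
         "https://forum.example.io/thread/42"}

changes = backlink_changes(march, april)
print(changes["added"])  # ['https://forum.example.io/thread/42']
print(changes["lost"])   # ['https://news.example.com/openings']
```

A lost backlink is not necessarily a broken link; the linking page may simply have been edited, so each candidate still needs a manual look.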

Server and Website Availability (Uptime)

While most large companies maintain services to monitor and guarantee server uptime, those responsible for a marketing website’s success should know specifically which indicators are tracked or contracted (e.g., site availability, page load times, server performance), as well as any contingency plans. Multiple studies of large data centers and international businesses point to several factors in unplanned downtime, such as cyber attacks and security issues, power and internet outages, and hardware failure. However, in about half of all incidents, human error was a contributing factor: failure to maintain security software and upgrades created vulnerabilities to cyber attack, misconfigured server hardware or hardware inadequate for the user load led to server crashes, and improperly installed hardware failed. Companies new to physical hosting services, or in-house departments facing capacity growth, should research vendors or network options before selecting hosting for critical websites.

Significant downtime during a peak traffic period can be costly for a large organization, both in immediate sales lost and in the longer-term loss of trust in the site’s security. SEO professionals working for smaller companies should be prepared to provide guidance on an uptime monitoring strategy and contingency planning that scales to the needs of the business.
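For a smaller company, a basic uptime monitor can be a scheduled script that requests the home page and records whether it answered. The sketch below is a minimal illustration using only the Python standard library; the target URL, the 10-second timeout, and the five-minute check interval in the usage example are assumptions, and a production setup would more likely rely on a dedicated monitoring service.

```python
import http.client
import time
import urllib.request


def check_once(url: str, timeout: float = 10.0) -> tuple:
    """Fetch url once; return (is_up, seconds_elapsed)."""
    start = time.monotonic()
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            is_up = 200 <= response.status < 400
    except (OSError, http.client.HTTPException):
        # HTTPError (4xx/5xx), DNS and connection failures, and timeouts
        # are all OSError subclasses; protocol errors are HTTPException.
        is_up = False
    return is_up, time.monotonic() - start


def availability_pct(results: list) -> float:
    """Share of successful checks, as a percentage."""
    if not results:
        raise ValueError("no checks recorded")
    return sum(1 for is_up, _ in results if is_up) / len(results) * 100


# A live check would look like: check_once("https://www.example.com/")
# Hypothetical log of one day of checks every five minutes (288 checks),
# three of which failed:
results = [(True, 0.4)] * 285 + [(False, 10.0)] * 3
print(f"{availability_pct(results):.2f}%")  # 98.96%
```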

Repair

Things break. Whether from seemingly unconnected internal changes or unforeseen environmental changes, high-value organic traffic can be interrupted by simple or complex factors, most of which can be repaired or at least resolved. Regardless of cause, issues must be resolved to bring traffic back to expected levels. A business’s own unrelated technology changes may affect a website element with SEO ramifications. External changes can cause site elements to break (e.g., a moved or retired external resource); these should be caught by weekly 404 reports or by an alert set up to send notifications of 404 errors. Responding to a search algorithm change requires determining its effect and creating a solution or workaround that does not harm organic traffic; depending on the traffic impact, this may require immediate action.
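A weekly 404 report for pages served by the site can be as simple as counting 404 responses in the web server’s access log. The sketch below assumes logs in the Common Log Format; the regular expression, the sample lines, and the paths are illustrative. Broken external resources referenced by the site would instead need a link checker that requests each external URL.

```python
import re
from collections import Counter

# Matches the request and status fields of a Common Log Format line, e.g.
# 203.0.113.7 - - [10/Apr/2024:13:55:36 +0000] "GET /old-menu HTTP/1.1" 404 512
LOG_PATTERN = re.compile(r'"(?:GET|HEAD|POST) (?P<path>\S+) [^"]*" (?P<status>\d{3})')


def not_found_report(log_lines) -> list:
    """Return (path, hits) pairs for 404 responses, most frequent first."""
    counts = Counter()
    for line in log_lines:
        match = LOG_PATTERN.search(line)
        if match and match.group("status") == "404":
            counts[match.group("path")] += 1
    return counts.most_common()


# Hypothetical log excerpt.
sample_log = [
    '203.0.113.7 - - [10/Apr/2024:13:55:36 +0000] "GET /old-menu HTTP/1.1" 404 512',
    '203.0.113.8 - - [10/Apr/2024:13:56:01 +0000] "GET /contact HTTP/1.1" 200 2048',
    '198.51.100.4 - - [10/Apr/2024:14:02:11 +0000] "GET /old-menu HTTP/1.1" 404 512',
    '198.51.100.9 - - [10/Apr/2024:14:05:42 +0000] "GET /img/retired-logo.png HTTP/1.1" 404 0',
]
print(not_found_report(sample_log))
# [('/old-menu', 2), ('/img/retired-logo.png', 1)]
```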

Maintenance

In addition to monitoring KPIs for improvement and fixing issues that arise from errors or environmental changes, companies must manage the ongoing, longer-term processes that support steady growth in positive KPIs. Remember, maintenance does not mean a specific time frame (e.g., “every 3 months”); it is a set of tasks required to keep SEO healthy and high-value organic traffic flowing.

Keyword Changes

Many SEO professionals recommend a quarterly keyword strategy review, but marketers can check search volume for each keyword at any time. If volume declines over time, look for keywords that better match the queries and intent of the business’s specific customers. If search volume has increased, businesses can use those keywords on other pages to help improve their performance. Beyond search volume, a downward trend in SERP position can also signal that keyword changes are needed. When a page’s keywords change, its tags (such as the title tag) and meta description must be updated as well.
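A quick way to separate rising from declining keywords is to fit a straight line to each keyword’s monthly search volume and look at the slope relative to its average volume. The sketch below uses an ordinary least-squares slope; the six months of volumes are hypothetical figures for the example queries used earlier, and the 2%-per-month tolerance for calling a trend “flat” is an arbitrary assumption.

```python
def slope(values: list) -> float:
    """Least-squares slope of values against their index (change per month)."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two data points")
    x_mean = (n - 1) / 2
    y_mean = sum(values) / n
    numerator = sum((x - x_mean) * (y - y_mean) for x, y in enumerate(values))
    denominator = sum((x - x_mean) ** 2 for x in range(n))
    return numerator / denominator


def classify_keywords(volumes: dict, tolerance_pct: float = 2.0) -> dict:
    """Label each keyword rising, declining, or flat, based on the monthly
    slope as a percentage of the keyword's average volume."""
    labels = {}
    for keyword, series in volumes.items():
        average = sum(series) / len(series)
        relative = slope(series) / average * 100 if average else 0.0
        if relative > tolerance_pct:
            labels[keyword] = "rising"
        elif relative < -tolerance_pct:
            labels[keyword] = "declining"
        else:
            labels[keyword] = "flat"
    return labels


# Hypothetical monthly search volumes, oldest month first.
monthly_volume = {
    "coffee near me": [8100, 8300, 8600, 9000, 9400, 9900],
    "coffee in chicago": [2400, 2300, 2150, 2000, 1900, 1750],
    "best coffee": [5400, 5500, 5350, 5450, 5400, 5500],
}
print(classify_keywords(monthly_volume))
# {'coffee near me': 'rising', 'coffee in chicago': 'declining', 'best coffee': 'flat'}
```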

Content Refresh

One of the most common maintenance efforts is a content refresh, a relatively simple exercise to improve traffic and ranking. The marketing team can sort a URL Report by clicks or by conversions to determine which pages most need refreshing. Because refreshing a page affects the pages connected to it, marketers should verify that internally linked pages are still contextually related, as should the backlinking pages on referring domains.
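Sorting a URL report is easy to automate once it is exported. The sketch below assumes a hypothetical CSV export with clicks for the previous and current periods plus current conversions (the column names and rows are invented); it ranks pages by click loss, largest first, and breaks ties in favor of pages with more conversions, since those are the most valuable to recover. Sorting directly by clicks or by conversions, as described above, would only change the sort key.

```python
import csv
import io

# Hypothetical URL report export; column names are assumptions.
URL_REPORT = """url,clicks_prev,clicks_curr,conversions_curr
/guides/brewing-basics,1200,780,14
/menu,900,950,40
/blog/espresso-vs-drip,640,410,3
/about,300,290,1
"""


def refresh_candidates(report_csv: str, top_n: int = 2) -> list:
    """Rank pages by click loss between periods (largest loss first),
    breaking ties by current conversions (highest first)."""
    rows = list(csv.DictReader(io.StringIO(report_csv)))

    def sort_key(row: dict) -> tuple:
        click_change = int(row["clicks_curr"]) - int(row["clicks_prev"])
        return click_change, -int(row["conversions_curr"])

    return [row["url"] for row in sorted(rows, key=sort_key)[:top_n]]


print(refresh_candidates(URL_REPORT))
# ['/guides/brewing-basics', '/blog/espresso-vs-drip']
```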

The frequency of content refreshes is determined by a business’s website- and page-level performance, as well as by its competitors’ performance. The marketing team should know how often high-ranking competitors refresh their content, and what trends those competitors exhibit during content updates. Frequent, high-quality content updates are often required just to maintain traffic levels.
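Once a team has recorded the dates on which competitor pages changed, the typical refresh interval is a short calculation. The sketch below assumes those dates were collected by some other means (for example, periodic manual checks); the competitor URLs and dates are hypothetical. It reports the mean number of days between consecutive observed updates.

```python
from datetime import date


def average_refresh_interval(update_dates: list) -> float:
    """Mean number of days between consecutive observed updates."""
    if len(update_dates) < 2:
        raise ValueError("need at least two observed updates")
    ordered = sorted(update_dates)
    gaps = [(later - earlier).days for earlier, later in zip(ordered, ordered[1:])]
    return sum(gaps) / len(gaps)


# Hypothetical observations of when competitor pages were updated.
competitor_updates = {
    "competitor-a.example/brewing-guide": [date(2024, 1, 10), date(2024, 2, 9), date(2024, 3, 10)],
    "competitor-b.example/brewing-guide": [date(2023, 11, 1), date(2024, 3, 1)],
}
for page, dates in competitor_updates.items():
    print(f"{page}: every {average_refresh_interval(dates):.0f} days")
# competitor-a.example/brewing-guide: every 30 days
# competitor-b.example/brewing-guide: every 121 days
```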

The Next SEO Cycle

Finally, how often a business undertakes major SEO updates depends on how its strategy evolves, and should come as naturally as strategic change: when it makes sense. Forward-looking business leaders are attuned to the needs of the customer, the business, and the technical, competitive, and social environment, identifying trends and their ramifications for the business and for the people and infrastructure connected to it.

Successful businesses analyze the data (their own, their competitors’, and their environment’s), extract relevant and valuable insights, and respond creatively to the immediate and long-term challenges of each page and keyword set.

References

Google. (2014, August 6). HTTPS as a ranking signal. https://webmasters.googleblog.com/2014/08/https-as-ranking-signal.html.

Hosting Manual. (2020, February 4). 3 Seconds: How Website Speed Impacts Visitors and Sales. https://www.hostingmanual.net/3-seconds-how-website-speed-impacts-visitors-sales/.

Schwartz, Barry. (2020, May 28). The Google Page Experience Update: User experience to become a Google ranking factor. https://searchengineland.com/the-google-page-experience-update-user-experience-to-become-a-google-ranking-factor-335252.

Shewan, Dan. (2018, April 19). 11 Easy Ways to Reduce Your Bounce Rate. https://www.wordstream.com/blog/ws/2016/04/07/reduce-bounce-rate.

Statista. (2019, September 10). Mobile Search Statistics & Facts. https://www.statista.com/topics/2479/mobile-search/.

V12. (2019, February 27). 25 Stats on Consumer Shopping Trends. https://v12data.com/blog/consumer-shopping-trends-stats/.

Wizdo, Lori. (2015, May 25). Myth Busting 101: Insights into the B2B Buyer Journey. https://go.forrester.com/blogs/15-05-25-myth_busting_101_insights_intothe_b2b_buyer_journey/.
